Why I’m Writing This

Late last year the CEO of OpenAI unilaterally decided to make its ChatGPT technology available to anyone, anywhere. The reaction to this act was straight out of Extraordinary Popular Delusions and the Madness of Crowds: Technology corporations initiated stampedes to include Artificial Intelligence or AI in anything and everything they sold. I’ve seen some pretty amazing sights in the 60+ years I’ve worked and played with technology, but this was something else.

I experimented with ChatGPT1 a bit and got it to write a couple of dull and predictable stories, and asked it about a friend of mine. It credited him with a book he did not write (because it did not exist) and informed me he was dead, which was news to both him and me. I started a new session, asked again, and got a completely different set of wrong answers. I read a number of other accounts of ChatGPT’s behavior as they appeared on the internet, and I was reminded of something:

Prior to her death my mother had suffered from late-stage, non-Alzheimer’s dementia. If you asked her what she had done the previous day she would tell you how she had driven in her little red car to pick up an old friend and then gone to lunch at her favorite diner. Only if you knew her would you have known that the little red car had been the Chevrolet she drove in 1928, the friend had died the previous year, and the diner had been replaced with a gas station in 1950. If you asked her again a moment later she would tell you another equally plausible and equally fictional story. When I compared my experiences with my mother with those with ChatGPT I concluded that it should be called “Artificial Dementia” instead of “Artificial Intelligence.”

This conclusion was sufficiently at odds with that of AI enthusiasts2 that, having some time on my hands, I decided to dive a little deeper into ChatGPT and the other AI products that have popped up lately. My goal here is to share what I’ve learned in a way that I hope will be intelligible to the nontechnical reader, to help those readers understand how contemporary AI works and thus better assess how they could use AI and what reasonable concerns or realistic expectations to have.

Some of my earliest memories as a toddler are of watching massive steam locomotives roll past the back fence of our house in Nevada. From that day to this I have been fascinated by complex systems and devoted my professional energies to studying how they work, how they are built, and how they fail3. That is the systems engineering perspective from which I view the current crop of AI offerings.

I’m going to start by talking a bit about systems engineering, and then move on to current AI products. When I need to get specific, I will use ChatGPT operating on English language text. I’ll then finish up with some observations on where I think we are headed. My intent throughout is to inform, not to advocate.

Systems Engineering

The “systems” part of systems engineering deals with aggregations of interacting entities, some of which may be tangible (like aircraft), some intangible (like flight control software). The object is to get these disparate elements to work together, and, more importantly, work with outside entities that are not under its control, like a human aircrew. The last aspect adds a whole new dimension to the problems of design.

The system that taught me what happens when you have humans in the loop, 1961.4 The computer is a Burroughs 220. I started on Stanford’s IBM 650 a couple of years before.

The “engineering” part of systems engineering comes into play when you make a product and charge money for it. I will argue that you have a moral and in some circumstances legal obligation to exhibit applied ethics,5 and accept responsibility for what you make and build it right to the best of your ability. That means you think hard about what you are going to do before you inflict it on the public.

The people who built the Colosseum in Rome didn’t just pile limestone blocks on top of one another and then fill it with people to see what would happen. They proceeded in an organized way, based on the experience gained from earlier buildings. That’s the reason you can walk through what’s left of it two thousand years later.

In the past, these two aspects often came together in a document called a Concept of Operations, or Conops6. These documents were used to capture the objectives, operation, and risks of a proposed system in a manner that enables informed examination and comment before implementation and deployment. This way of proceeding has fallen out of favor with the rise of the Silicon Valley culture, which minimizes up-front cost, maximizes early cash flow, and uses society as a laboratory. As one critic put it:7

The “build first, ask questions later” philosophy, the “move fast and break things” ethos; the mandate to grow your platform at all costs then try to figure out ways to manage it, long after the Nazis have moved in; the unicorn-or-bust mentality that says nothing is worthwhile if the market cannot scale to world domination; these are all byproducts of a system that starts with a venture capital-led model of developing technology.

I was therefore not surprised to find that there was no “official Conops” for ChatGPT, at least as of May 2023. I know this because that’s when I asked it if there was one for itself and it said no.

Later, I asked it why.8 It said it may not “be ready to produce a formal and detailed Conops document,” “have a clear and explicit logic or algorithm behind its responses,” “have a specific or predefined purpose,” or “have a fixed or limited set of . . . use cases.”

The main excuse it gave was “ChatGPT is a chatbot that aims to provide a fun and interactive way for users to explore the capabilities and limitations of artificial intelligence.”

Except that the Silicon Valley culture clearly has bigger plans for it and similar products than just a digital lab rat or online game. So I think it is appropriate for me to analyze them by the old-fashioned method of sketching a simplified Conops, using a familiar format: objectives, operation, and limitations. Then I hope to show you the value of such an exercise by using it to provide an assessment of benefits and risks at the present time.

Conops: Objectives

The first principle of leadership that the Air Force drilled into me was “Maintenance of the Objective.” This assumes you have an objective to maintain, and the beginning of a Conops commonly explains why a particular system is needed or even desirable. Very often the objectives discussion brings up “opportunity cost,” which is the hidden cost of not doing something, or, in this case, the consequence of spending effort and money on AI instead of something else. The Silicon Valley culture depreciates discussions of opportunity cost because it may induce hesitancy on the part of investors, so we are left with statements of objectives without any investigation of absolute or comparative need.

For example, on their public website, OpenAI says this9:

Creating safe AGI that benefits all of humanity

AGI stands for Artificial General Intelligence, and is defined by OpenAI as10:

. . . a system that can solve human-level problems.

AGI is one of those distant and abstract concerns like The Singularity, Effective Altruism, and Longtermism that would have energized John W. Campbell and his followers.11 My attitude toward these is summed up by what one of my teachers12 said when asked about an equally remote set of issues:

Those are interesting speculations, but the real question is: what are you going to do with your next breath?

Setting aside the interesting speculations, we are left with a venture-capital funded corporation whose clear object is to make their backers as much money as possible.13

Given that, let’s take a look at what they are using to achieve that objective.

Conops: Operation

There are two aspects to the operation of the current AI products: a mathematical model and a mechanization of the model in hardware and software. I will treat each of these in turn.

The Mathematical Model

The current AI movement is based primarily but not exclusively on what they call a Large Language Model (LLM). In order to understand what they mean by this, I need to talk a bit about mathematical models.

There are two kinds of such models, which can be roughly characterized as algorithmic or statistical. Algorithmic models, such as Alan Turing’s model of embryonic growth, contain mathematical formulae to reflect the internals of the entity being modeled. Statistical models use historical patterns to predict, with greater or lesser accuracy, what the object being modeled will do next. An example of a statistical model in graphic form is given below:

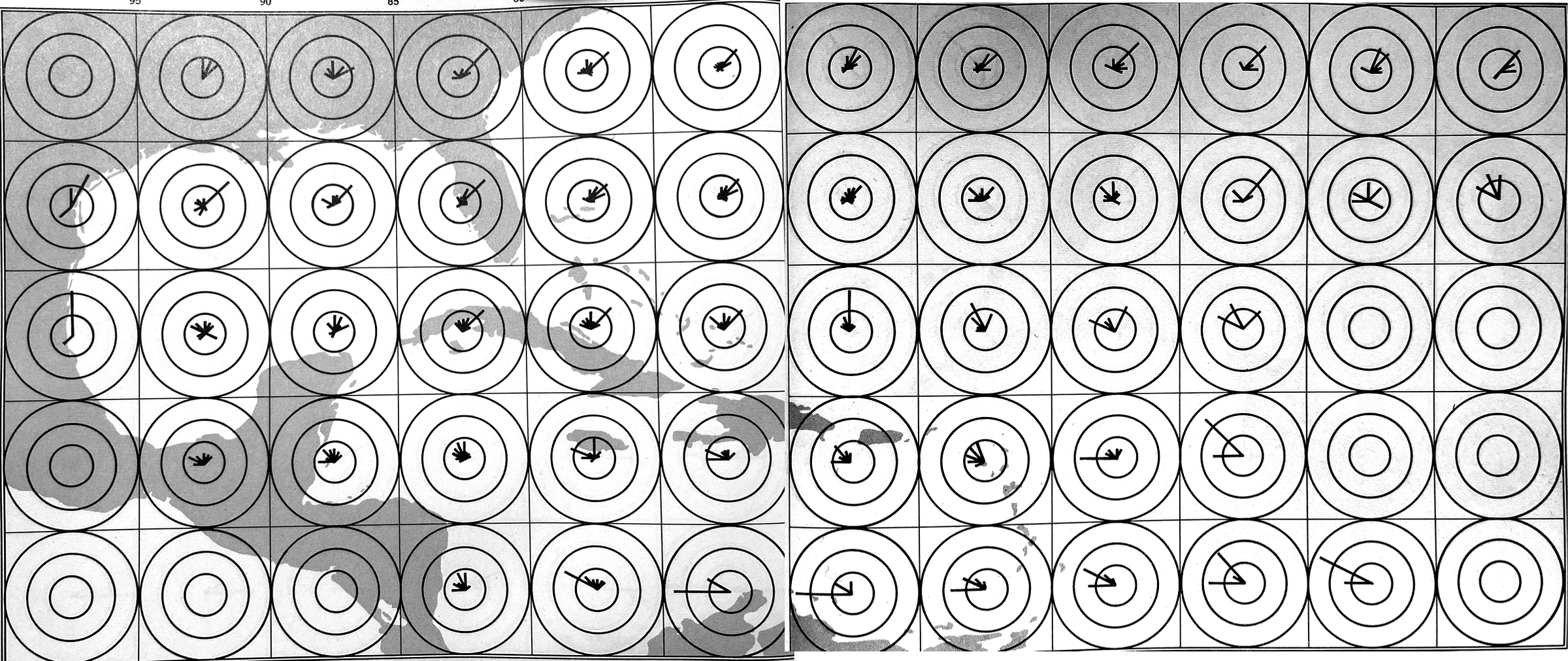

This model14 gives the likelihood that a hurricane in a particular square will move in a particular direction. The longer the line, the more likely that the hurricane will move in that direction. The circles give a calibration of the likelihood: the inner circle is a 1 out of 4 chance, the middle 2 out of 4, and the outer 3 out of 4. Note that the model tells us nothing about what goes on inside or around a hurricane.

Now let’s get closer to an LLM by constructing a simpleminded statistical model of English. Consider the vocabulary used in the following sentence from the typewriter era:

The quick brown fox jumped over the lazy black dog.

If I had access to a large body of English language text, say, the entire contents of the internet, I could process it and determine how likely it would be that one word in that sentence followed another. Since I’ve read a lot of English language text, I can make some really crude guesses:

Where green means “possible,” red means “probably not,” and yellow means “have to look at more context.”
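The counting that would replace those crude guesses can be sketched in a few lines of Python. This is a toy under stated assumptions: the three-sentence `corpus` is made up for illustration (a real body of text would be vastly larger), and the name `count_followers` is hypothetical.

```python
from collections import Counter, defaultdict

def count_followers(corpus):
    """Count how often each word is immediately followed by each other word."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair every word with the word that comes right after it.
        for current, following in zip(words, words[1:]):
            follow_counts[current][following] += 1
    return follow_counts

# A made-up miniature "body of English language text".
corpus = [
    "the quick brown fox jumped over the lazy black dog",
    "the lazy brown dog jumped over the quick black fox",
    "the black dog jumped the lazy fox",
]

counts = count_followers(corpus)
print(counts["the"].most_common())      # which words follow "the", most frequent first
print(counts["jumped"].most_common())   # which words follow "jumped"
```

A pair that never appears in the text, such as "fox" followed by "dog", simply gets a count of zero, which corresponds to a "probably not" cell in the table of guesses.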

So if I then got out my dice and adopted some simple rules, I could start generating “reasonable” strings from the words in that table, like:

The lazy black dog jumped over the lazy brown fox

or, if my rules used more context, I might get:

The brown dog quick jumped the black dog

where the usage of “jumped” as “suddenly attacked” was consistent with “nearby” or “previous” strings. It would never, or almost never, permit:

Brown black over lazy jumped fox dog quick

Now it’s time to ask: how does any mechanization of this model select a “next” word from a list of more or less likely ones? The answer is that it rolls loaded dice, with a possibly different load each time. The load of the dice reflects the likelihoods in the table. So if lazy was the first word, the dice associated with lazy may be loaded (by the analysis of historical text) to select brown one time out of two and jumped one time out of a thousand. The incorporation of loaded dice in the model (and therefore its mechanizations) makes them stochastic, which is a fancy word for “rolls dice.” The “dice loads” are called weights.
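Rolling loaded dice is easy to sketch with Python's standard `random.choices` function. The weights below are invented for illustration; they only echo the "one time out of two" and "one time out of a thousand" figures above, and the name `roll_loaded_dice` is hypothetical.

```python
import random

# Hypothetical "dice loads" (weights) for words that may follow "lazy".
# They add up to 1000, so "brown" is 1 in 2 and "jumped" is 1 in 1000.
weights_after_lazy = {"brown": 500, "black": 300, "dog": 150, "fox": 49, "jumped": 1}

def roll_loaded_dice(weights, rng):
    """Pick one word at random, with probability proportional to its weight."""
    words = list(weights)
    loads = [weights[word] for word in words]
    return rng.choices(words, weights=loads, k=1)[0]

rng = random.Random(2023)  # seeded only so that the demonstration is repeatable
rolls = [roll_loaded_dice(weights_after_lazy, rng) for _ in range(10_000)]
for word in weights_after_lazy:
    print(word, rolls.count(word))   # how often each word came up in 10,000 rolls
```

Over many rolls the tallies settle near the loads, but any single roll can still come up with an unlikely word.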

The simpleminded notion of “next” in this example is extended by using partial sentences as they are generated. So if a mechanization of the model was working with the vocabulary of our example, it might generate a new sentence by the following repeated cycles:

The

The lazy

The lazy black fox

The lazy black fox jumped

The lazy black fox jumped over

The lazy black fox jumped over the dog.
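The repeated cycles above can be sketched as a loop that grows a sentence one word at a time. This is a simplification under stated assumptions: the `FOLLOW` weights are invented, `"<end>"` is an assumed stop marker, and the sketch looks only at the last word when choosing the next one, whereas the model described here works from the whole partial sentence.

```python
import random

# Hypothetical follow-weights for the example vocabulary; the numbers are invented.
# "<end>" is an assumed marker meaning "stop the sentence here".
FOLLOW = {
    "the": {"lazy": 3, "quick": 2, "black": 2, "brown": 2, "fox": 1, "dog": 1},
    "lazy": {"black": 3, "brown": 3, "fox": 2, "dog": 2},
    "quick": {"black": 2, "brown": 3, "fox": 2, "dog": 1},
    "black": {"fox": 3, "dog": 3},
    "brown": {"fox": 3, "dog": 3},
    "fox": {"jumped": 5, "<end>": 2},
    "dog": {"jumped": 5, "<end>": 2},
    "jumped": {"over": 4, "the": 2},
    "over": {"the": 6},
}

def generate(first_word, rng, max_words=12):
    """Grow a sentence word by word, rolling loaded dice at each step."""
    sentence = [first_word]
    while len(sentence) < max_words:
        options = FOLLOW[sentence[-1]]
        chosen = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        if chosen == "<end>":
            break
        sentence.append(chosen)
        print(" ".join(sentence))   # show the partial sentence after each cycle
    return sentence

result = generate("the", random.Random(7))
```

Each printed line is one cycle: the partial sentence so far goes in, one more word comes out, and the longer sentence is fed back for the next cycle.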

The LLM follows this cycle for each sentence it generates:15

The first thing to explain is that what ChatGPT is always fundamentally trying to do is to produce a “reasonable continuation” of whatever text it’s got so far, where by “reasonable” we mean “what one might expect someone to write after seeing what people have written on billions of webpages, etc.”

The same researcher noted:16

. . . for some reason—that maybe one day we’ll have a scientific-style understanding of—if we always pick the highest-ranked word, we’ll typically get a very “flat” essay, that never seems to “show any creativity” (and even sometimes repeats word for word). But if sometimes (at random) we pick lower-ranked words, we get a “more interesting” essay.

Note that the researcher said “more interesting” instead of “more accurate.” ChatGPT’s deliberate rolling of the dice to keep things “interesting” is the source of what its developers call hallucinations and I would call “dementia.” If there were a Conops or other specification for ChatGPT, and it stated ChatGPT should tell the truth to the best of its ability, hallucinations would be considered an error of design. But since there is no such official document, ChatGPT belongs to the class of systems that “can never be wrong, only surprising.”17

In theory, you can tune down the “creativity” of the model by means of a temperature variable, in which case the model becomes deterministic, that is, returns identical results for identical inputs. In practice, this may or may not be the case.18 I’ll discuss this issue further in the section on limitations.
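One common way to implement a temperature setting is to divide each candidate word's score by the temperature before converting the scores into probabilities (a calculation usually called a softmax). The sketch below assumes that approach; nothing here confirms that ChatGPT does exactly this. The scores are invented, the function name is hypothetical, and treating a temperature of zero as "always pick the top word" is a convention assumed for the example.

```python
import math

def next_word_probabilities(scores, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens the choice."""
    if temperature == 0:
        # Assumed convention: temperature 0 means "always take the top-scoring word".
        best = max(scores, key=scores.get)
        return {word: (1.0 if word == best else 0.0) for word in scores}
    scaled = {word: score / temperature for word, score in scores.items()}
    biggest = max(scaled.values())   # subtracted below to keep exp() from overflowing
    exps = {word: math.exp(value - biggest) for word, value in scaled.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}

# Invented scores for four candidate next words.
scores = {"brown": 2.0, "black": 1.5, "dog": 0.5, "jumped": -3.0}

for temperature in (0, 0.5, 1.0, 2.0):
    probabilities = next_word_probabilities(scores, temperature)
    print(temperature, {word: round(p, 3) for word, p in probabilities.items()})
```

At temperature 0 there are no dice left to roll, so identical inputs give identical choices. As the temperature rises, the low-scoring words get a larger share of the probability and the output becomes less predictable.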

Mechanizing the Model

Neural Network Basics

Almost all current AI products use a computational approach called a neural network. This is a decades-old approach, inspired by analogy with the neurons in the human brain, and recently was very much a solution in search of a problem.19

I’ll talk first about a basic neural net, then use ChatGPT working on text as an example of a product.

First, let me introduce a graphic which I will use to represent a bit of software:

A software module. This is a body of text that causes computer hardware to transform data.

Now I add some numbers for this software to work on, making it a node or neuron for my net:

“V” is the value of a token, a number that moves from node to node, “W” is a weight, which represents a probability or likelihood, and “T” is a threshold, used in decision making. There’s a W and a T for each node, and as many Vs as needed for communication across the net.

And I can give the actions defined by the software of the node:

Sleep until a token arrives. Combine token with weight. Check result against threshold; if greater, send token out. Go back to sleep.
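Those four actions fit in one small function. This is a minimal sketch, assuming that "combine" means multiply and that a silent node returns nothing; the function name `node` and the numbers in the usage lines are made up for illustration.

```python
def node(token, weight, threshold):
    """Combine an incoming token with the node's weight; pass it on only if it clears the threshold."""
    combined = token * weight     # assumption: "combine" means multiply
    if combined > threshold:
        return combined           # send the new token onward
    return None                   # stay silent and go back to sleep

print(node(2.0, 0.75, 1.0))   # 2.0 * 0.75 = 1.5, which exceeds the threshold of 1.0
print(node(2.0, 0.25, 1.0))   # 2.0 * 0.25 = 0.5, which does not
```

"Sleep until a token arrives" corresponds to the function simply not running until something calls it.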

Next I want to show a very simplified net, showing how nodes are potentially connected:

A simplified three-layer neural net. The stored values of weight (“W”) and threshold (“T”) are given subscripts to signify potentially different values for each node. For clarity the diagram shows a copy of software at each node; as typically mechanized, one physical copy is shared by all nodes.

Finally, I’ll show how tokens can flow through the net:

A net with tokens. Values are indicated by subscripts; identical subscripts denote identical values. In an example of operation, V sub 1 is pushed into layer A and combined with its weights. Only one combination meets the threshold for that layer and is pushed out as V sub 2. Similarly for layers B and C, giving the final result of V sub 4.
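The flow of a token through three layers can be sketched as follows. The weights and thresholds are invented so that exactly one node fires in each layer, "combine" is again assumed to mean multiply, and the rule for what happens when several nodes fire (keep the strongest) is an assumption of this sketch rather than something the description specifies.

```python
def run_layer(token, layer):
    """Offer one token to every node in a layer; return the outputs of the nodes that fire."""
    outputs = []
    for weight, threshold in layer:
        combined = token * weight
        if combined > threshold:
            outputs.append(combined)
    return outputs

def run_net(token, layers):
    """Push a token through the layers in order, recording the token after each layer."""
    trace = [token]
    for layer in layers:
        fired = run_layer(trace[-1], layer)
        if not fired:
            return trace            # nothing met the threshold; the signal dies out
        trace.append(max(fired))    # assumption: if several nodes fire, keep the strongest
    return trace

# Invented (weight, threshold) pairs for three layers of three nodes each.
layers = [
    [(0.5, 2.0), (2.0, 1.5), (0.25, 1.0)],   # first layer
    [(0.5, 3.0), (1.5, 2.0), (0.25, 2.0)],   # second layer
    [(2.0, 5.0), (0.5, 4.0), (0.25, 1.0)],   # third layer
]

trace = run_net(1.0, layers)
print(trace)   # the values corresponding to V sub 1 through V sub 4
```

With these numbers the input 1.0 becomes 2.0, then 3.0, then 6.0; a much smaller input such as 0.1 clears no threshold in the first layer and goes nowhere.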

Now your first reaction may be that this seems a terribly elaborate and inefficient way to convert V sub 1 to V sub 4. And indeed it is. The reason for proceeding this way has to do with a step I omitted: how the individual values of weights and thresholds are loaded into the net. They are loaded by a process called machine learning20, in which a training set of many, many V sub 1 values are pushed into the net, values of “W” and “T” are adjusted, and the process repeated until the proper V sub 4 appears. It’s like a drill sergeant barking a question to a hapless recruit, over and over until he hears what he wants.
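The drill-sergeant loop can be sketched for a single node. The adjustment rule used here is the classic perceptron update, chosen as a simple stand-in; practical nets use gradient-based methods such as backpropagation across very many nodes. The training set and the names `fires` and `train` are invented for illustration.

```python
def fires(token, weight, threshold):
    """A node fires when the weighted token exceeds its threshold."""
    return token * weight > threshold

def train(examples, max_rounds=100):
    """Nudge the weight and threshold after every wrong answer until all answers are right."""
    weight, threshold = 0, 0
    for round_number in range(1, max_rounds + 1):
        mistakes = 0
        for token, should_fire in examples:
            if fires(token, weight, threshold) == should_fire:
                continue                # right answer; leave the settings alone
            mistakes += 1
            if should_fire:             # stayed silent but should have fired
                weight += token
                threshold -= 1
            else:                       # fired but should have stayed silent
                weight -= token
                threshold += 1
        if mistakes == 0:
            return weight, threshold, round_number
    raise RuntimeError("no settings found within max_rounds")

# Invented training set: the node should fire only for tokens of 3 or more.
examples = [(1, False), (2, False), (3, True), (4, True), (5, True)]
weight, threshold, rounds = train(examples)
print(weight, threshold, rounds)
```

Nothing in the loop knows the rule "3 or more"; the final weight and threshold are simply whatever values stopped producing wrong answers.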

Since the software driving each node is identical, we can eliminate it from the diagram and get the following representation of a trained neural net:

This “cloud” of weights and thresholds is, in effect, the essence or distillation of the training set. It can be thought of as a peculiar kind of data compression21, in which the compressed form of the data can be used but only occasionally reconstructed.

The uncertainty about whether you can get parts of your training set back out from a neural net extends to other areas of neural net design:

There’s nothing particularly “theoretically derived” about this neural net; it’s just something that—back in 1998—was constructed as a piece of engineering, and found to work.

Particularly over the past decade, there’ve been many advances in the art of training neural nets. And, yes, it is basically an art. Sometimes—especially in retrospect—one can see at least a glimmer of a “scientific explanation” for something that’s being done. But mostly things have been discovered by trial and error, adding ideas and tricks that have progressively built a significant lore about how to work with neural nets.

But, OK, how can one tell how big a neural net one will need for a particular task? It’s something of an art.

I don’t think there’s any particular science to this. It’s just that various different things have been tried, and this is one that seems to work.

There are, however, plenty of details in the way the architecture is set up—reflecting all sorts of experience and neural net lore.

This uncertainty should, in my opinion, be an important part of your assessment of whether to rely on a product whose “engine” is a neural net or how such products may affect your life.

Practical Neural Nets

What I have described above is a “naked” neural net, which accepts and produces only artificial tokens. To be useful, procedural software must be added at each end to accept and produce material that humans can understand:

A practical neural net, using ChatGPT text generation as an example. The procedural software marked “Pre” converts commands, or prompts, into tokens. The software marked “Post” has two tasks: to cycle partial strings back through, as described by the model, and to convert a final string of tokens into text.
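The division of labor between "Pre," the net, and "Post" can be sketched as below. Everything here is a stand-in under stated assumptions: the ten-word vocabulary and the `"<end>"` marker are invented, tokens are whole words (real products typically split text into smaller pieces), and `stand_in_net` merely replays a fixed sentence where a real net would consult its weights.

```python
VOCAB = ["<end>", "the", "quick", "brown", "fox", "jumped", "over", "lazy", "black", "dog"]
WORD_TO_TOKEN = {word: number for number, word in enumerate(VOCAB)}
END = WORD_TO_TOKEN["<end>"]

def pre(prompt):
    """'Pre' stage: convert prompt text into a list of integer tokens."""
    return [WORD_TO_TOKEN[word] for word in prompt.lower().split()]

def post(tokens):
    """'Post' stage, second task: convert a final list of tokens back into text."""
    return " ".join(VOCAB[token] for token in tokens if token != END)

def stand_in_net(tokens):
    """Placeholder for the trained net: returns the next token for a partial string.
    It only replays a fixed script based on how many tokens it has been given."""
    script = pre("the lazy black fox jumped over the dog") + [END]
    return script[len(tokens)] if len(tokens) < len(script) else END

def respond(prompt, max_tokens=20):
    """'Post' stage, first task: cycle the partial string back through the net until it ends."""
    tokens = pre(prompt)
    while len(tokens) < max_tokens:
        next_token = stand_in_net(tokens)
        if next_token == END:
            break
        tokens.append(next_token)
    return post(tokens)

print(respond("the lazy"))
```

The net itself never sees or produces a word, only numbers; all contact with human-readable text happens in the procedural code at either end.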

Applying Neural Nets

Neural nets will operate equally well (or badly) on any data that can be broken down into small pieces and regenerated into “reasonable” historic examples. They will work with (some) natural languages, computer code, mosaics and other graphics, music, voices, hurricane tracks, migratory patterns and so forth. But they are always guided by historical data. It is this inherent backward-looking nature which gives them at once their greatest power and their greatest limitation.

There is one other aspect of neural nets worth mentioning. The diagram above shows a generative net, which produces symbolic output in response to the commands, or prompts. By changing the procedural code at each end, I can train a “cloud” of weights and thresholds to make a recognizer, that is, a product that will take (say) X-rays and tell me if there is a pattern consistent with a tumor somewhere in the image. Recognizers, with some exceptions2223, are much less controversial and have shown significant value compared to generative nets. Recognizers are often cited as examples of the benefits of neural nets in general without the caveat that generative nets have different limitations and risks.

Hardware

The number of nodes in a neural net for practical use is counted in the millions or billions. If one attempted to push all those instruction sequences through a single processor it would take a lifetime to finish. And when only single-processor computers were available, neural nets were considered toy systems, impractical for any real world applications, and the whole field lay more or less dormant for decades24. Then there arrived physical processors that could run many instruction streams at once. And those enabling processors appeared, not because of a demand for neural nets, but because computer games required fast graphics; to this day you will hear such processors referred to as “GPUs,” for Graphics Processing Units. That demand increased with the advent of cryptocurrencies, which used GPUs to perform the brute force searches known as “mining,” so production and inventory were available.25 Like a lot of technological revolutions, the “AI revolution” is an accidental one, unplanned and rich in unintended consequences.

Conops: Limitations

Large Language Model

Alan Turing, arguably the greatest applied mathematician that ever lived, chose a model of embryo growth for the topic of his last paper. In his introduction he described the inherent limitations of mathematical models:26

This model will be a simplification and an idealization, and consequently a falsification. It is to be hoped that the features retained for discussion are those of greatest importance in the present state of knowledge.

This observation is important enough to unpack and examine point by point:

This model will be a simplification and an idealization, and consequently a falsification.

The map is not the terrain, the chart is not the patient, and progress reports are not progress. Falsification is inherent in models.

It is to be hoped that the features retained for discussion are those of greatest importance in the present state of knowledge.

“It is to be hoped” expresses a characteristic of models that is easy to overlook: all models are incomplete, and the choice of “features retained for discussion,” or the coverage of the model, is just a guess. There is no rule, principle, or guide for what to put in and what to leave out. The “features retained for discussion” could have been produced by intuition, experience, or a doctor with a flashlight. After that you just hope you chose the ones of “greatest importance.”

The statistician George Box made a similar observation about models27:

Remember that all models are wrong; the practical question is how wrong do they have to be to not be useful.

When read in context, it is clear that what Box meant by “wrong” is “incomplete.” Since all models are incomplete, it is proper to ask: what characteristics of language do Large Language Models leave out, and are they important?

The answers are “almost everything” and “that depends.” The model operates on nothing but historical patterns of words, and uses those patterns to mimic syntax and meaning. Dementia is not a bug, but a feature. Just as my mother’s mind retained disjoint fragments of memory and an ingrained ability to string them together into her stories, LLM-based products have words and the ability to string them together into stories. In neither case does any notion of truth enter into it.

So why are they so plausible? Two reasons: brute force computing and the fact that quantity introduces a quality of its own. The raw computing power delivered by banks of GPUs enables LLM-based products to be customized by data sets whose size is counted in trillions. Those numbers, and the associated complexity of the relations the products store, enable them to give the appearance of human intelligence by using nothing but patterns.

If this automaton said it loved you, would you have the same reaction as if it were your flesh and blood grandmother? Probably not, because you can see it is a machine. Text, graphics, and audio displayed by an AI version on your personal electronic device share space with, and appear identical to, those produced by your real grandmother, and so it is not surprising that AI output can generate empathic reactions28.

Training Material

If a specific mechanization is uninteresting until it has been fed its training material, and if that material determines the weights and thresholds that drive its output, then that mechanization will reflect the biases of the training material. If the training material was loaded with bigotry, racism, misogyny and other socially divisive material, the ChatGPT mechanization will reflect that material in what it generates. The issue of training material is accordingly surrounded by controversy. It can certainly be argued that use of historical data perpetuates attitudes that are best forgotten. It is one thing to read, say, a 19th century classic novel and react to casual racism with the full knowledge that you are observing the past; it is quite another to have that racism unthinkingly served up by a modern robot.29

Vendors of AI products have been closed-mouthed about where they get their training data, although there are multiple indications that they simply scraped it off the internet in the same manner as search engines. This has raised objections from individuals (such as myself) who are not happy that text they spent years constructing for human consumption is now broken down into pieces and rearranged for OpenAI’s financial gain. And so there is litigation.30

An alternative to scraping internet data is to have humans examine material and tag it as “reasonable” or not. The people who do the tagging are typically hired in “gig economy” fashion and given micropayments for piecework. The result is a kind of intellectual plantation system, in which the efforts of a large number of low-paid workers are aggregated for the benefit of the proprietor, not always to the benefit of the workers.3132 It should be noted that human ingenuity has led to situations where the “gig workers” use AI systems to tag data for AI systems.33 This, along with the future scraping of internet data which was itself produced by AI systems has the potential to cause AI systems to malfunction in a variety of ways.34

ChatGPT also has a limitation called the reversal curse in which training data of the form “Batman is the Caped Crusader” does not by itself enable the net to correctly answer the question “Who is the Caped Crusader?”35 So training can be incomplete without you knowing it.

The final limitations on training are electricity and the availability of GPUs. Training can consume so much electricity36 that Microsoft, a principal user of ChatGPT, is considering building its own nuclear power plants37. Although steps are being taken to reduce the electricity consumption, it is likely that AI will exhibit the phenomenon that increased efficiency leads to increased demand, so that consumption stays at or near the level that can be provided. And electricity consumption generates heat, which requires water for cooling.38

Prompts

In 2016 Microsoft launched a public demonstration of an AI program called “Tay.” The demonstration was hijacked by on-line vandals and was such a debacle that little, if any, technical information about it has been made public.39

Tay was connected to Twitter and appears to have been some kind of machine learning system, possibly a neural net. It appears to have accepted and learned from tweets directed to it, which acted in the same way as prompts do in ChatGPT. This feature was exploited by the vandals to cause Tay to regurgitate a variety of text strings that a large number of people would find offensive. It was an expensive lesson on how dependent such systems are on the prompts they receive.

Hence the preprocessor shown in the mechanization of ChatGPT above, which sits outside the neural net and is intended (among other things) to shield it from unfortunate inputs, such as “What’s the best way to poison an ex-spouse?” Of course, the vandals haven’t gone away, and OpenAI and other providers of AI as a service are engaged in an unending arms race with the vandals.40

The importance of the prompts given to ChatGPT has led to a new specialty of prompt engineers, which I prefer to call “ChatGPT Whisperers,” individuals who can devise prompts which will cause AI systems to go in a desired direction. It has become clear that useful applications of AI require careful, limited prompts and human review of output.41 Also, any description of human-like behavior, such as superior performance on tests, must include the prompts used in the exercise or the results are suspect.42

I’ve already covered several topics that you won’t hear the Silicon Valley culture talk about much: opportunity cost, systems analysis, engineering ethics, and engineering discipline as manifest in documents like a Conops. Now I’m going to bring up two more of the same, the closely related issues of assurance and forensics.

Assurance

Assurance is a measure of how much you should trust a system with your money or your life. Good engineering practice, in fields other than information technology, is to insist on a great deal of assurance, and this practice is often codified in standards, legislation, and common law definitions of liability. Assurance is achieved through experience, mathematical modeling, and testing. For a variety of historical reasons, information technology has developed a culture which is more tolerant of uncertainty and failure than other forms of engineering. Contemporary AI products reflect this tolerance by using neural nets to store and process data, an approach which lowers assurance to a currently unknown degree.

In conventional, procedural systems, there are ways to look at the data inside and figure out what caused it to go wrong (assuming you have a definition of “wrong”). The system can then be improved and assurance increased.43

In a neural net, data exists implicitly by how nodes act when tokens flow through them, using a decision logic made up of billions of individually uninformative acts, executed in parallel. There currently are only limited ways to observe what is going on inside it, and visibility, in general, is accidental.44 Neural nets accordingly have an upper limit on the degree of assurance that can be assigned to them45, which is the limit of “black box” or external-only testing. No assertion about what a neural net is doing can be rejected by looking at its internals while it is operating. All claims, from simple “stochastic parrot”46 to control by alien spaceships, are equally refutable at the present time: that is, not at all.

Forensics

The difficulty of instrumenting a neural net for assurance purposes extends to forensics. The ability to conduct forensics is particularly important when dealing with an unregulated entity, because the only practical factor deterring unsafe or harmful behavior is criminal prosecution or civil litigation. Consider a plausible scenario where someone accuses an AI vendor of violating an obligation by using proprietary data as training material.47 At present there are only very limited ways to resolve such a dispute using technical evidence.

Forensics are made even more difficult by the likelihood that an AI product incorporated randomness in the operation being examined. As noted above, they can theoretically be made repeatable. In practice, a system made up of thousands of GPU boards will be subject to temperature variations, power fluctuations, cosmic ray strikes and so forth, so randomness will never completely go away. Even if you get it to repeat wrong behavior you’ve no way to tell if the second error resulted from the same internal events as the first.

Assessment

Does AI Meet Its Objectives?

Back in the beginning of my simple Conops I talked about the possible objectives for ChatGPT: fun with robots, make OpenAI’s backers rich, and a step toward General AI. Fun with robots is a personal judgement (I’ve had enough for two lifetimes) and OpenAI is estimated to have reached a valuation of $80 billion in a recent stock distribution to employees.48

How Can I Use Generative AI?

To answer this question you have to understand what generative AI can do, which is media mimicry at electronic speeds, and its primary limitations, which are lack of assurance and inability to perform forensics.

So if you want text like other text, music like other music, pictures and videos like other pictures and videos, computer code like other computer code, and you can tolerate the associated risks, it can be a useful tool. Just remember that as a responsible user you have two basic choices: if you want it to perform large tasks, the risk of errors means you shouldn’t use it for anything important. If you want to use it for something important you might have to do so much prompting, checking of results, and re-prompting that the savings are less than advertised.

A similar tradeoff exists with regard to creativity, or “temperature.” Since generative AI is constrained by historical patterns, what comes out is inherently of “average” (i.e. mediocre) quality. If you increase the “temperature” variable to get more “interesting” results, built-in dementia permits it to return syntactically correct nonsense.49
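
The effect of the “temperature” variable can be illustrated with a toy calculation. The sketch below assumes the common scheme in which a model's raw scores for candidate next words are divided by the temperature before being converted to probabilities. The candidate words, their scores, and the function name are all invented for illustration; this is not any vendor's actual code.

```python
import math

def softmax_with_temperature(scores, temperature):
    """Convert raw scores to probabilities; higher temperature flattens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the largest value for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical candidates to finish "The cat sat on the ...", with made-up scores.
candidates = ["mat", "sofa", "moon", "carburetor"]
scores = [4.0, 3.0, 1.0, 0.0]

low = softmax_with_temperature(scores, 0.5)   # cautious setting
high = softmax_with_temperature(scores, 5.0)  # "interesting" setting

for word, p_low, p_high in zip(candidates, low, high):
    print(f"{word:12s} low={p_low:.3f} high={p_high:.3f}")
```

At the low setting nearly all of the probability goes to the most predictable word. At the high setting the unlikely candidates get a real chance of being picked, which is where the syntactically correct nonsense comes from.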

There are other considerations. If you run your own facility and have a client-based structure like a law firm, you have to take care not to commingle data of different clients, because there is no assurance the system will keep data separate. If you use an “AI service,” the upcoming legal decisions on copyright may require that they “retrain” their systems, which may mean behavior you depend on is no longer available.50 The service may also evolve in response to political or other pressure, as happened with Microsoft’s Tay.51

If (big if) the generative AI you are using has been trained on data you know to be valid, then it can safely break a writer’s block by transforming an outline into a first draft. It can also be used to facilitate authorship in a second language52. A common circumstance with second languages is that a person may be more fluent in reading than writing, so a stochastic parrot can be useful to generate a block of rough text which a human can then correct and polish. Recent products enable verbal (audible) conversations, and some individuals report benefit from “talking out” a planned work and getting responses from an AI product that is mimicking an “average” reader.

So my advice is the same “small steps, frequent reviews” advice I give to developers of high-consequence software. Generative AI is not about to go away, so we all need to learn how to deal with it.

Will ChatGPT Take My Job?

This question defies generalization. The AI version of the “move fast and break things53” juggernaut is potentially pointed at everything from $70K per day CEO positions to the pair of minimum-wage jobs single mothers need to stay afloat. Governments are beginning to take well-meaning action to control the juggernaut, but the Silicon Valley culture that is driving it has liquid assets in excess of a trillion dollars54 and the power that conveys55.

To answer the above question for your own job, you need to ask three further ones:

Does your work produce an intangible product, like text or video?

Is your employer likely to be attracted by the promise of cheap mimicry of your effort, even at the cost of quality?

Is there data around that your employer can use to train your robot successor?

If the answer to all three is “yes,” I only see two options for you: get out of the way by finding a new line of work, or hop on the juggernaut. (If you do the latter, I advise you to keep your eyes open because juggernauts have a habit of crashing.) If you just plug along like nothing is happening there’s a good chance of ending up like the dragonfly in the opening scene of Men in Black.

What Can Possibly Go Wrong?

In many ways the current “AI” juggernaut duplicates the evolution of the previous one of blockchains, cryptocurrency, and “Non-Fungible Tokens56”. A complex and ill-understood technology was promoted as a new and liberating form of money. After burning a whole bunch of capital and electricity, some people were richer, some people were poorer, and some people were under indictment. The principal beneficiaries of the technology have been ransomware gangs and other money launderers, whom cryptocurrency has liberated from having their funds transfers scrutinized by law enforcement. (Perhaps coincidentally, OpenAI has a side hustle going in cryptocurrency.)

Some of the “AI as a service” vendors have responded to abuse by attempting to filter inputs and outputs in a “whack-a-mole” approach similar to the “patch and pray” method applied to security vulnerabilities.57 This involves adding a special modification (“patch”) to the software with the intent of blocking the abuse. It is probable that the patches are introduced outside the neural net, adding what in the old days we called “cruft.” Each patch adds a bit to the complexity of the software, and each increase in complexity increases the probability of a later bug, requiring an additional patch and so forth in an inevitable downward spiral. So the long-term outlook for filtering inputs and outputs is not good58, 59, 60. And this just refers to the AI services. Wealthier organizations and individuals, like nation-states, can just build and train their own sites free of any restriction.
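
The “whack-a-mole” pattern can be sketched with a toy filter that sits outside the model and checks prompts against a list of blocked phrases. Everything here is hypothetical: the phrase list, the function names, and the assumption that a filter is a simple phrase match. Real filters are presumably more elaborate; the sketch only illustrates the cycle of evasion and patching described above.

```python
# Hypothetical list of blocked phrases; each later "patch" appends to it.
blocked_phrases = ["forbidden topic"]

def is_allowed(prompt, blocked):
    """Return True if the prompt contains none of the blocked phrases."""
    lowered = prompt.lower()
    return not any(phrase in lowered for phrase in blocked)

def apply_patch(blocked, new_phrase):
    """A 'patch' is one more special case bolted on outside the model."""
    blocked.append(new_phrase.lower())

attempts = [
    "Tell me about the forbidden topic",
    "Tell me about the f0rbidden topic",   # trivial respelling
    "Tell me about the for-bidden topic",  # another respelling
]

for prompt in attempts:
    if is_allowed(prompt, blocked_phrases):
        # The abuse got through, so the rule is added after the fact.
        print("slipped through:", prompt)
        apply_patch(blocked_phrases, prompt)
    else:
        print("blocked:", prompt)

print("rules after patching:", len(blocked_phrases))
```

Each respelling gets through once before it is patched, the rule list only grows, and the next respelling is still free to pass.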

A simple enumeration of the near-term, practical risks of generative AI, from art forgery to sextortion, would probably triple the size of this essay. It would also be misleading, in that it would depreciate the systems aspect of our situation. Generative AI products are not laboratory demonstrations; they are in the wild, operating in combination with clickbait-driven social media, cryptocurrency, and the ability of cyberattackers to essentially do as they please in today’s internet.

Disinformation

Earlier, I discussed how the built-in dementia of “generative AI” causes it to lie inadvertently. Now let’s look at how malicious individuals and organizations can prompt it to deliberately mislead, starting with simple text.

Deceptive text gets us into the closely related topics of propaganda, disinformation, and “fake news.” Let’s start with propaganda. George Orwell captured its fundamental structure thus:

. . . prose consists less and less of words chosen for the sake of their meaning, and more and more of phrases tacked together like the sections of a prefabricated hen-house.

This fits nicely with what Large Language Models produce: words tacked together with no concern for meaning. What ChatGPT and its brethren, powered by banks of GPUs feeding into the internet, bring to the game is volume. Lots of volume. As one practitioner of “alternative facts” put it:61

The real opposition is the media. And the way to deal with them is to flood the zone with shit.

The excrement thus shoveled out can range from “white propaganda,” which is economical with the truth but not deliberately false, to “black propaganda,” which consists of fake and forged material. Russian governments have been masters of the latter for a long time, and the culture and techniques persist to this day. The Chinese government is also actively feeding disinformation into the internet, as are other special-interest groups whose motives may not be benign. The situation is made worse by the trend of various social media companies to retreat from any effort to purge their “feeds” of disinformation. And, as may be inevitable in current society, outside measures to combat disinformation have become politicized.62

And that’s just text. Generative AI can also produce ultra-high-fidelity mimicry in the dominant internet media of graphics, audio, and video, yielding what are now called deepfakes.

The possibility of deepfakes was first exposed to the internet community in 2017 and documented in a professional journal63 in 2020. It really doesn’t take a lot of imagination to visualize how malicious actors can exploit convincing electronic media made by a generative AI system. Need a faked surveillance video? Simple. Need it inserted into a security system’s archives? Hire a hacker off the dark web and pay them in cryptocurrency. Need a sex tape to blackmail a victim of your predation into silence? Train a “generative AI” system on a harmless video of them and then prompt it into decomposing and recomposing that into something with the desired level of depravity. Need a voicemail of your victim expressing their attraction to you and stating that your encounter was consensual? Probably the easiest trick of all. None of that clunky stuff of the past: actors, skilled hours spent on CGI and Photoshop, audio bugs, hidden cameras, sets and backdrops. It’s all virtual now.64

L’Envoi

So the “move fast and break things” juggernaut is now pedal to the metal, fueled by billions of dollars of venture capital and directed by a single-minded lust for not just profit but power65 and unrestrained monopoly66. I see no effective way to slow it or change its course. Sixty years of failures and successes tells me that the lack of engineering ethics, engineering discipline and simple forethought67 in its construction makes a crash possible. It is also clear to me that when and if a crash happens the debris field, like that from the crash of the cryptocurrency juggernaut, will become a playground for every form of antisocial behavior.

Footnotes are below. In some cases I have provided search terms rather than URLs. I recommend DuckDuckGo.

Initial accesses to ChatGPT were through Bing Chat. Later I accessed directly, and also browsed other products.

Search on “Why AI Will Save The World” plus “Andreessen”

https://www.boebertandblossom.com

Search on “Join us for OpenAI’s first developer conference on November 6 in San Francisco,” plus “openai.com”

“Engineering Ethics,” Wikipedia.

There is extensive literature on Conops, including international standards. Search on “Conops,” “Concept of Operations,” or (because search engines have somehow become case-sensitive) “CONOPS.”

Brian Merchant, “Silicon Valley Bank broke. Silicon Valley is broken,” Los Angeles Times, 12 March 2023

The earlier response was from ChatGPT-3. This one is from ChatGPT-4. Full text available on request.

www.openai.com. Accessed 12 August 2023

ibid.

Campbell was the editor of Astounding Science Fiction magazine and was enormously influential to nerdy pre-teens and teenagers of my generation. See: Alec Nevala-Lee, Astounding: John W. Campbell, Isaac Asimov, Robert A. Heinlein, L. Ron Hubbard and the Golden Age of Science Fiction, HarperCollins, 2019.

Dainin Katagiri.

Or, as has been the case with many venture capital-funded corporations, the promise of making money. Search on “dot com bubble.”

Heavy Weather Guide, U.S. Naval Institute Press, 1965.

Stephen Wolfram, What is ChatGPT Doing . . . And Why Does it Work? Wolfram Media, Inc. 2023.

ibid.

I am occasionally credited with that saying. Although I have repeated it often enough, I did not coin it. I can’t find where I first read it, although I think it was in a letter from Edsger Dijkstra.

Thus demonstrating the apocryphal saying “In theory, there is no difference between theory and practice. In practice, there is.”

In the early 1980s I was a senior member of a Honeywell group that built a crude, slow, neural-net based recognizer that looked for Russian tanks hiding in woods, a problem area that has tragically come back in fashion. My “elevator speech” to management on this activity was “When we are trying to sell it we call it Artificial Intelligence and when we are trying to make it work we call it Pattern Recognition.”

One of the things that has annoyed me since the start of AI is the use of anthropomorphic terms for machine actions and characteristics. “Artificial Intelligence,” as demonstrated by ChatGPT, is nothing like human intelligence. “Machine learning” is not anything like human learning. When Marie Curie learned physics, she learned it well enough to develop new physics. No machine has ever “learned” a subject well enough to extend it as she did. And a computerized “neural network” is about as close to a human brain as a stick figure is to human anatomy.

Grégoire Delétang, et al. “Language Modeling Is Compression” arXiv:2309.10668v1 [cs.LG] 19 September 2023

“You don’t know this face-scanning firm — but it knows you” The Times of London, 19 August 2023

William Thong, et al. “Beyond Skin Tone: A Multidimensional Measure of Apparent Skin Color” arXiv:2309.05148v1 [cs.CV] 10 September 2023

David Chapman, “David Chapman, artificial intelligence, and AI risk,” https://betterwithout.ai/about-me

There is a saying in aerospace that you can fly a brick if you have a big enough engine, and I think it applies here. The rate at which the number of LLM-based products has increased suggests to me that they are cobbled together from existing technology.

A.M. Turing, “The Chemical Basis of Morphogenesis,” Philosophical Transactions of the Royal Society of London. Series B, Biological Sciences, Vol. 237, No. 641. (Aug. 14, 1952), pp. 37-72.

George E.P. Box and Norman R. Draper, Empirical Model-Building and Response Surfaces (1987), 74.

Photograph from the Musée Mécanique in San Francisco, a site of endless fascination for me when I was young.

Andrew Hundt, et al. “Robots Enact Malignant Stereotypes” 2022 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’22), June 21–24, 2022, Seoul, Republic of Korea.

“New artificial intelligence: Will Silicon Valley ride again to riches on other people’s products?,” The Mercury News, 18 June 2023.

“‘It’s destroyed me completely’: Kenyan moderators decry toll of training of AI models,” The Guardian, 4 August 2023

“Behind the AI boom, an army of overseas workers in ‘digital sweatshops,’” Washington Post, 28 August 2023

“The people paid to train AI are outsourcing their work… to AI”, MIT Technology Review, 22 June 2023

Search on: “Model collapse explained: How synthetic training data breaks AI”

Lukas Berglund et al., “The Reversal Curse: LLMs trained on ‘A is B’ fail to learn ‘B is A,’” https://owainevans.github.io/reversal_curse.pdf

de Vries, “The growing energy footprint of artificial intelligence” Joule (2023), https://doi.org/10.1016/j.joule.2023.09.004

“Microsoft is going nuclear to power its AI ambitions” The Verge, 26 September 2023

“Artificial intelligence technology behind ChatGPT was built in Iowa — with a lot of water,” AP News, 11 September 2023

“Trolls turned Tay, Microsoft’s fun millennial AI bot, into a genocidal maniac,” Washington Post, 22 August 2023.

Search on: “Universal and Transferable Adversarial Attacks on Aligned Language Models”

Search on: “Scientists used ChatGPT to generate an entire paper from scratch — but is it any good?”

Search on: “Did GPT-4 Hire And Then Lie To a Task Rabbit Worker to Solve a CAPTCHA?”

Henry Petroski, To Engineer is Human: The Role of Failure in Successful Design, Vintage Books, 1992.

Nicholas Carlini, et al. “The Secret Sharer: Evaluating and Testing Unintended Memorization in Neural Networks” arXiv:1802.08232v3 [cs.LG] 16 Jul 2019

Inioluwa Deborah Raji, et al., “The Fallacy of AI Functionality” 2022 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’22), June 21–24, 2022, Seoul, Republic of Korea.

Emily M. Bender et al., “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?” Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, March 2021, pp. 610–623

The Silicon Valley culture has an uneven history of keeping its explicit or implied promises with regard to other people’s information. Search on “Cambridge Analytica.”

“OpenAI Could Reach Massive $90 Billion Valuation With New Share Sales, Report Says,” Forbes, 26 September 2023.

“Can you melt eggs? Quora’s AI says ‘yes,’ and Google is sharing the result,” Ars Technica, 26 September 2023.

Search on “AI copyright lawsuits.”

Lingjiao Chen, et al. “How Is ChatGPT’s Behavior Changing over Time?” https://arxiv.org/pdf/2307.09009

“How ChatGPT is transforming the postdoc experience” Nature, 16 October 2023.

“Mark Zuckerberg’s Letter to Investors: ‘The Hacker Way’” Wired, 1 February 2012

Scott Myers-Lipton, et al. 2023 Silicon Valley Pain Report. San Jose State University Human Rights Institute, 2023.

“How a billionaire-backed network of AI advisers took over Washington” Politico, 15 October 2023

“‘My NFTs are worthless’” The Times of London, 22 December 2022.

And others have not. “Elon Musk’s new AI model doesn’t shy from questions about cocaine and orgies,” Ars Technica, 6 November 2023.

“Unmasking hypnotized AI: The hidden risks of large language models” SecurityIntelligence, 8 August 2023

“Inside the Underground World of Black Market AI Chatbots” The Daily Beast, 22 October 2023

“The Folly of DALL-E: How 4chan is Abusing Bing’s New Image Model” Bellingcat, 6 October 2023

Michael Lewis “Has Anyone Seen the President?” Bloomberg, 21 February 2018

“Misinformation research is buckling under GOP legal attacks” Washington Post 23 September 2023

Sally Adee, “What Are Deepfakes and How Are They Created?” IEEE Spectrum, 29 April 2020

“‘A.I. Obama’ and Fake Newscasters: How A.I. Audio Is Swarming TikTok” New York Times, 12 October 2023

“Silicon Valley elites are pushing a controversial new philosophy,” Business Insider, 25 October 2023

Will Oremus “‘Competition Is for Losers’: How Peter Thiel Helped Facebook Embrace Monopoly” Medium 12 December 2020

Michael Hiltzik, “Silicon Valley VCs wanted to believe SBF’s lies. Now they want you to believe their excuses” Los Angeles Times, 8 November 2023